The LearningOnline Network with CAPA


Comparison of Homework Functionality

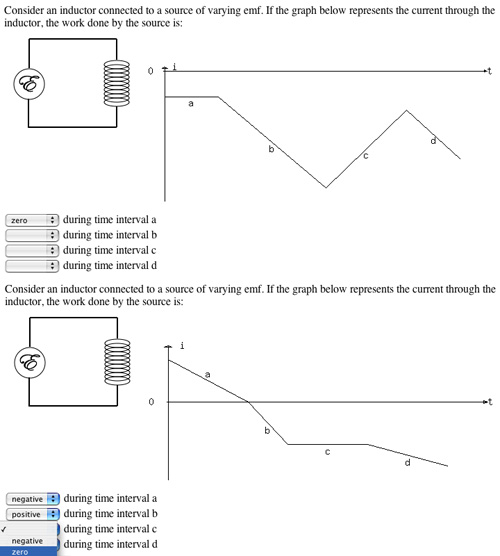

In 1992 CAPA (a Computer-Assisted Personalized Approach) was started at Michigan State University in a small introductory physics course as a way to provide randomized homework with immediate feedback. Different students would have different versions (for example, different numbers and options) of the same problem, so that they could discuss problems with each other, but not simply exchange the solution. As an example, the figure on the right shows two versions of the same homework problem, as seen in LON-CAPA today. It might be argued that different randomizations beyond simple changing of numerical values may result in problems of different difficulty, and a problem like the one in the figure would be unfair in an exam setting. However, when used in homework settings, higher randomization (including major variation of the scenario) leads to more fruitful online discussions.
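The per-student randomization described above can be illustrated with a minimal sketch. This is not CAPA's or LON-CAPA's actual mechanism; it only assumes that each student's version is derived deterministically from a seed, so the same student always sees the same numbers. The function names, the problem identifier `cart-01`, and the sample physics problem are all hypothetical.

```python
import hashlib
import math
import random


def student_seed(student_id: str, problem_id: str) -> int:
    """Derive a stable integer seed from the (student, problem) pair."""
    digest = hashlib.sha256(f"{student_id}:{problem_id}".encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big")


def make_version(student_id: str, problem_id: str = "cart-01") -> dict:
    """Build one student's version of a hypothetical F = m * a problem."""
    rng = random.Random(student_seed(student_id, problem_id))
    mass_kg = rng.randint(2, 20)              # randomized integer mass
    accel = round(rng.uniform(1.0, 5.0), 1)   # randomized acceleration
    return {
        "text": (
            f"A {mass_kg} kg cart accelerates at {accel} m/s^2. "
            "What net force (in N) acts on it?"
        ),
        "answer": mass_kg * accel,
    }


def is_correct(version: dict, submitted: float, rel_tol: float = 0.01) -> bool:
    """Give immediate feedback: accept answers within a relative tolerance."""
    return math.isclose(submitted, version["answer"], rel_tol=rel_tol)


if __name__ == "__main__":
    for sid in ("alice", "bob"):
        print(sid, "->", make_version(sid)["text"])
```

Because the seed depends only on the student and the problem, regenerating the problem reproduces the same version, while two students comparing notes will generally see different numbers and so have to discuss the method rather than the final answer.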

When CAPA was first introduced, students would receive a printout of the problems and would enter their solutions through a terminal. In later years, a web interface for answer input was introduced. Almost in parallel, starting in 1991, the University of Texas Homework Service (now Quest) ... this concern could not be resolved, as several universities interpret the law as prohibiting the off-campus storage of grade-relevant information or the outsourcing of services that handle such data.

CAPA's distribution principle brought some challenges that the UT Service did not encounter. Because writing new problems is time-consuming, and because introductory physics problems are very similar across courses, faculty at different institutions soon started to exchange problem libraries with each other. But because they ran separate installations, such exchanges meant sending the associated files via FTP or exchanging floppy disks. Overcoming this infrastructural shortcoming was one of the main design goals of the next generation of CAPA, which is now LON-CAPA.

Other technical implementation issues of CAPA, such as (at the time) dependence on X-Windows for problem editing and course management, prompted a team at the University of Rochester to develop WeBWorK.

In summary, CAPA, the UT Homework Service, WeBWorK, and WebAssign offer very similar problem functionality with comparable randomization features. The systems differ in their distribution mechanisms, their technology choices (CAPA and the UT Homework Service were initially driven strongly by paper-based assignments and terminal input and only later added web interfaces, while WeBWorK and WebAssign were web applications from the start), and their problem editing interfaces (CAPA, the UT Homework Service, and WeBWorK offer programming languages (a custom implementation, enhanced C, and enhanced Perl, respectively), while WebAssign uses templates). CAPA, the UT Homework Service, and WeBWorK are free, and WebAssign is a commercial product.

In addition to these more traditional homework systems, online tutorial systems, most notably CyberTutor


Contact Us: lon-capa@lon-capa.org. Site maintained by Gerd Kortemeyer.


©2013 Michigan State University Board of Trustees.